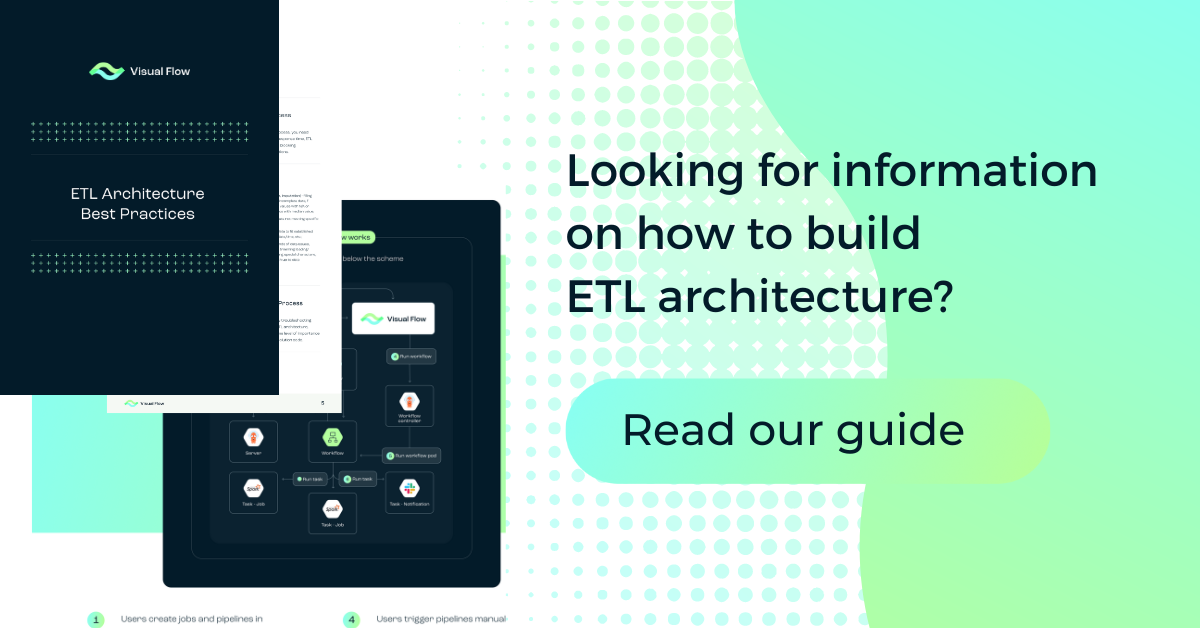

ETL is a key component of the data integration process, which allows moving and transforming data between its source and target destinations. However, building a powerful and advanced ETL architecture can be quite a challenge.

Let’s talk about the essentials of an ETL architecture, what challenges there are when setting one up, and, most importantly, which ETL architecture best practices and principles you should pay attention to. Keep reading to learn all about achieving a perfect ETL architecture.

What is ETL Architecture?

ETL stands for extract, transform, and load. It is a general term for the processes that extract data from source systems, transform it into a convenient structure, and load it into the destination repository. Other related terms are export, import, data conversion, file parsing, and web-scraping.

An ETL architecture maps out how your ETL processes are implemented from start to finish. It is built around three key ETL steps:

- Data extraction. The ETL process starts with defining the required types of data and extracting this data from different sources.

- Data transformation. This stage ensures that the data is converted into the correct form and structured before being moved to the storage.

- Converted data load. At the final stage, the converted data is transferred to the target repository, such as a data lake, data warehouse, or database.
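
The three steps above can be sketched as a minimal pipeline. This is only an illustration under stated assumptions: the inline CSV source, the `payments` table, and the function names are hypothetical, and an in-memory SQLite database stands in for the target repository.

```python
import csv
import io
import sqlite3

# Hypothetical source data; a real pipeline would read from files, APIs, or databases.
SOURCE_CSV = "id,name,amount\n1, Alice ,10.50\n2,Bob,7\n"


def extract(raw_csv):
    """Extract: read rows from the source as dictionaries."""
    return list(csv.DictReader(io.StringIO(raw_csv)))


def transform(rows):
    """Transform: trim text and convert types to match the target schema."""
    return [(int(r["id"]), r["name"].strip(), float(r["amount"])) for r in rows]


def load(records, conn):
    """Load: write the converted records into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS payments "
        "(id INTEGER PRIMARY KEY, name TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO payments VALUES (?, ?, ?)", records)
    conn.commit()


def run_pipeline(raw_csv, conn):
    load(transform(extract(raw_csv)), conn)


conn = sqlite3.connect(":memory:")  # stand-in for a warehouse or database
run_pipeline(SOURCE_CSV, conn)
print(conn.execute("SELECT * FROM payments ORDER BY id").fetchall())
# prints [(1, 'Alice', 10.5), (2, 'Bob', 7.0)]
```

Keeping each step in its own function makes it possible to test, replace, or scale one stage without touching the others.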

ETL Architecture Challenges

ETL architecture development and implementation go hand in hand with certain complexities and ETL challenges. Let’s take a look at the problems most frequently encountered when working with data in an ETL process.

Data Security and Privacy

A large amount of data in an ETL architecture is private and confidential. This is why the underlying procedures for managing and processing consumer data are strictly regulated and monitored. Absolute compliance with all standards and requirements when processing consumer data is critical. This means that the ETL architecture must fully guarantee the reliability and security of data at every stage of an ETL project.

Poor Performance

Extraction, transformation, and load processes are often highly time- and resource-consuming tasks. In most cases, it is the “transformation” stage that becomes the performance bottleneck and slows down the whole pipeline. Optimizing data processing speed and achieving the highest possible ETL efficiency should be one of the key priorities when designing an architecture. Effective solutions here include parallel and distributed processing, incremental data loading, and splitting large data sets into smaller chunks.
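
As a rough sketch of splitting a large data set into smaller chunks and transforming them in parallel, consider the example below. The `transform_chunk` logic, the chunk size, and the worker count are hypothetical. A thread pool keeps the example short; CPU-heavy transformations would more likely use a process pool or a distributed engine such as Spark.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(records, chunk_size):
    """Split a large list into smaller lists of at most chunk_size items."""
    return [records[i:i + chunk_size] for i in range(0, len(records), chunk_size)]


def transform_chunk(chunk):
    """Hypothetical transformation: trim whitespace and upper-case each value."""
    return [value.strip().upper() for value in chunk]


def transform_in_parallel(records, chunk_size=2, max_workers=4):
    chunks = split_into_chunks(records, chunk_size)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() yields results in input order, so output rows line up with input rows
        results = pool.map(transform_chunk, chunks)
        return [row for chunk in results for row in chunk]


records = [" a ", "b", "c ", " d", "e"]
print(transform_in_parallel(records))
# prints ['A', 'B', 'C', 'D', 'E']
```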

Difficulties with Adaptation to Changing Business Needs

ETL pipelines must be kept sustainable, persistent, and flexible. Custom ETL architecture development can be a great way to make the data extraction procedure more flexible. On top of that, it is best to work with a platform that supports numerous end-to-end integrations. An adaptable ETL architecture makes it easier to switch data sources and reorganize the ETL platform.

Data Latency and Volume

When an enterprise needs to make fast data-driven decisions, it has to extract data at low latency. Here it is a matter of choosing between working with older data extracted at higher latency and committing the powerful resources needed to achieve low latency.

The volume of data to be retrieved is a very important parameter. An approach that works for small amounts of data often scales badly as the volume grows. Parallel extraction is a solution for working with large volumes at optimal latencies, but it is complicated to build and difficult to maintain.

Organization of the Data Extraction Process

Orchestration and planning are critical processes. Data extraction should be scheduled for a specific time, taking into account data volume and quality, latency, and source limitations. This can be tricky to achieve when introducing a hybrid model.

Working with Data from Disparate Sources

Working with different sources complicates data management processes. Disparate sources increase the data management surface, as the requirements for orchestration, tracking, and error correction grow.

11 ETL Architecture Best Practices

Let’s explore modern ETL architecture best practices used in building ETL architectures that work for your business goals.

Consolidation of Data from Different Sources

One of the most common scenarios is having various data sources and needing to make data from all of them “friendly” for extraction and further processing. Timely data consolidation is one of the streaming ETL architecture best practices. It is carried out with the help of a specialized tool and is fully implemented in Visual Flow.

Data Quality Check and Possibility of Regular Data Enrichment

This ETL architecture best practice concerns not only the quality of data but also filtering, structuring, and establishing relationships between different data assets. Typically, ETL pipelines fetch and load more information than necessary, and a large volume of data can slow processes down.

This is why it is necessary to optimize extraction, perform transformations, and filter out unnecessary and repetitive data. In addition, an important task of each ETL framework is to provide the possibility of regularly supplementing data, that is, adding new assets to the system.
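
A minimal sketch of such filtering is shown below, assuming records arrive as dictionaries. The field names and the `clean_records` helper are hypothetical: it skips incomplete rows, drops fields the target does not need, and removes repeated records by key.

```python
def clean_records(records, required_fields, key_field):
    """Drop incomplete rows, keep only the needed fields, and remove duplicates by key.

    Assumes key_field is one of required_fields.
    """
    seen = set()
    cleaned = []
    for record in records:
        # Quality check: skip rows where a required value is missing or empty
        if any(record.get(field) in (None, "") for field in required_fields):
            continue
        key = record[key_field]
        if key in seen:  # repeated record, already kept once
            continue
        seen.add(key)
        # Keep only the fields the target actually needs
        cleaned.append({field: record[field] for field in required_fields})
    return cleaned


raw = [
    {"id": 1, "email": "a@example.com", "debug": "x"},
    {"id": 1, "email": "a@example.com", "debug": "y"},  # duplicate id
    {"id": 2, "email": "", "debug": "z"},               # missing email
    {"id": 3, "email": "c@example.com", "debug": "w"},
]
print(clean_records(raw, required_fields=["id", "email"], key_field="id"))
# prints [{'id': 1, 'email': 'a@example.com'}, {'id': 3, 'email': 'c@example.com'}]
```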

In Visual Flow, data quality checks (including checks when connecting a new source), structuring, filtering, and linking of data are available out of the box. You can also replenish data regularly according to certain rules, for example, on a schedule.

Optimization of Your ETL/ELT Workflows (Performance & Scalability)

This is one of the best practices and principles for ETL architecture which goes beyond the standard ETL solution and is a significant advantage and feature of the Visual Flow approach. In terms of ETL performance and scalability, Visual Flow definitely wins because it has Spark under the hood.

Here we are dealing with an infrastructure that is deployed on the fly when we need to run our application (as Spark and Kubernetes capacities lead to auto-scalable workflows) in contrast to standard ETL methods where the load on the server increases significantly as data grows.

A Large Number of Prebuilt Transformations

Prebuilt transformations are not only very important in the context of big data ETL architecture best practices but also relatively easy to implement and refine. In Visual Flow, the set of such transformations, including aggregation functions, can be extended continuously.

Metadata Processing

Metadata is essential to data mapping and needs to be laid down at the architectural level. Processing, storing, and providing convenient access to metadata when developing ETL/ELT (extract, load, transform) processes is quite feasible, but it takes considerable effort. Without metadata, only the person who designed the process would understand it. The metadata processing practice is currently not available in Visual Flow but is coming soon.

Connection Manager

Connection management is an architecturally important point in terms of real-time ETL architecture best practices, and it greatly affects the usability of the ETL development process. If an ETL framework lacks a connection manager, developers have to specify the connection parameters anew each time they create a process, which makes the work much less convenient.

A connection manager is an interface that stores configured custom connection parameters for a data storage. It helps developers work faster and avoid the mistakes that come with configuring the parameters each time. These parameters are kept in an encrypted format and can be reused across several ETL/ELT processes. This practice is not currently implemented in Visual Flow but is coming soon.
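
To illustrate the idea, here is a small sketch of a connection registry that several jobs can share. It is not Visual Flow's implementation: the `ConnectionConfig` and `ConnectionManager` names and the sample parameters are hypothetical, and the encrypted storage mentioned above is deliberately left out to keep the example self-contained.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConnectionConfig:
    """Hypothetical set of parameters describing one data storage."""
    host: str
    port: int
    database: str
    user: str


class ConnectionManager:
    """Stores named connection settings once so that many jobs can reuse them."""

    def __init__(self):
        self._configs = {}

    def register(self, name, config):
        self._configs[name] = config

    def get(self, name):
        try:
            return self._configs[name]
        except KeyError:
            raise KeyError(f"No connection named {name!r} is registered") from None


manager = ConnectionManager()
manager.register(
    "warehouse",
    ConnectionConfig(host="db.example.com", port=5432, database="analytics", user="etl_user"),
)

# Two different jobs reuse the same stored parameters instead of re-entering them
orders_job_target = manager.get("warehouse")
customers_job_target = manager.get("warehouse")
print(orders_job_target == customers_job_target)
# prints True
```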

Easy Access to the History of ETL Logs

Runtime monitoring is a must when we talk about ETL architecture best practices in the ETL development process. In particular, metadata about each run is collected and organized so that you can understand how the entire ETL pipeline works. Easy access to the history of ETL logs, in a format familiar to an SQL developer, is a very important part of building a comprehensive ETL pipeline. This history not only helps fix bugs but also provides insights that can enrich the data.

In Visual Flow, reports are sent by email in the form of Spark logs and a summarized file. This is not the fastest way to access history logs, but work on this feature for the paid version is in progress.

Performance and Status Monitoring

It is important to check the status and access the history of ETL logs in case something goes wrong. In Visual Flow, efficient data analytics performance and status monitoring features are implemented by default.

Recovery Point

There are two aspects to this best practice of ETL architecture. The first is the technical one, which lies under the hood — when something fails before data processing is initiated. The second is when the processing kicks off and the failure occurs somewhere at the data load stage.

In this case, if the process crashes, nothing should be loaded. A partial data load is not suitable because you would still need to process the entire data set the next time you attempt to load it.
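
One common way to get this all-or-nothing behavior is to wrap the load in a database transaction. The sketch below uses Python's built-in `sqlite3` module as a stand-in for the target storage; the `target` table and the `load_all_or_nothing` helper are hypothetical.

```python
import sqlite3


def load_all_or_nothing(conn, rows):
    """Insert every row in one transaction; roll back completely on any failure."""
    try:
        with conn:  # commits on success, rolls back if an exception is raised
            conn.executemany("INSERT INTO target (id, value) VALUES (?, ?)", rows)
        return True
    except sqlite3.Error:
        return False


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE target (id INTEGER PRIMARY KEY, value TEXT NOT NULL)")
conn.commit()

ok = load_all_or_nothing(conn, [(1, "a"), (2, "b")])
# The second batch fails on a duplicate id, so its first row is rolled back too
failed = load_all_or_nothing(conn, [(3, "c"), (3, "duplicate id")])

row_count = conn.execute("SELECT COUNT(*) FROM target").fetchone()[0]
print(ok, failed, row_count)
# prints True False 2
```

Because the failed batch leaves no rows behind, the next attempt can simply reload the whole batch without first cleaning up partial results.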

In Visual Flow, auto-restart is implemented to tackle such issues. Three recovery points are created: one for failures that occur before the start of the job, one for failures that occur after the start of the job, and a stable recovery point that we can roll back to when we do not want to rerun a process that doesn’t handle data correctly.

Error Alert Service to Change Code in Debug Mode

Error alerts during ETL are critical to help resolve errors in a timely manner. In Visual Flow, this ETL pipeline architecture best practice can be employed using Spark. Mechanically, this process can be described as follows: the job stops in a specific place, which is displayed in a separate window in the UI. Standard ETL tools do not support this and are unlikely to in the near future.

Version Control

Having a clear display of how versions are controlled during the ETL is a must. The lack of one is a classic issue of all visual ETL tools. Version control is essential to tracking, organizing, and controlling all data changes taking place. Without version control, even a powerful and advanced ETL tool is insufficient. By the way, Visual Flow has GitHub integration, so you can be sure of our product’s relevance.

How Can We Help?

Visual Flow is a cloud-native advanced ETL tool with a hassle-free user interface that brings together the best of Kubernetes, Spark, and Argo Workflows. The purpose of this combination is to give ETL developers who don’t want to write code a comprehensive tool built on the unique capabilities of Spark and Kubernetes. On top of that, Visual Flow is the best solution for CTOs and architects looking to facilitate and streamline workflows in the teams they manage.

Our ETL tool becomes necessary at the stage when ETL processes pile up and run in bulk, no matter their complexity. Yes, the classic Python+GitHub+Airflow chain can easily tackle similar tasks, but for that you need people who know all those tools, as well as fitting tech resources.

Visual Flow is designed to make an ETL developer’s life simpler. Contact us and experience it for yourself.

Conclusion

The development and implementation of a perfect extraction, transformation, and load solution should run on essential architecture features as well as ETL best practices. Combining all of the above practices and concepts in a custom solution is challenging even for experienced developers. And Visual Flow may just fix the situation here. Visit our website to learn more and achieve your ideal ETL architecture.

FAQ

ETL architecture covers three key steps: extraction, transformation, and load. The extraction phase unloads data from source systems as quickly and as lightly as possible. The data transformation and load stages are about integrating and moving integrated data to the final target destination.

The most common and best practices for ETL architecture are the following: consolidation of data from different sources, data quality check and the possibility of regular data enrichment, optimization of your ETL/ELT workflows (performance & scalability), a large number of prebuilt transformations, metadata processing, presence of a connection manager, easy access to the history of ETL logs, performance and status monitoring, recovery point, error alert service to change code in debug mode, and version control.

When organizing streamlined ETL processes, certain conditions must be met and certain features must be achieved. On the one hand, each process must have a specific goal, and the focus must remain precisely on achieving it. On the other hand, minimizing touchpoints means that the data for each source should be processed in as few steps as possible. To achieve both of these goals, an ideal ETL pipeline also requires symmetry, identification, and pattern reuse.
