ETL performance is crucial to data integration in nearly any business. It affects the quality of the data a company gathers from its business interactions and, consequently, managers’ ability to make informed business decisions.

From our experience, the contribution of ETL and other business intelligence (BI) tools is valued by many companies’ managers.

So, the data engineer’s ongoing job is to make sure ETL runs smoothly and enhances a company’s business operations through advanced data analytics.

The Impact of Poor ETL Performance

Let’s look at the potential consequences of inadequate ETL performance:

- Performing independent data verification and validation becomes impossible.

You naturally want to receive homogeneous data, as no advanced analytics is possible when forms of data are mixed. However, if ETL is malfunctioning, the tools you use to verify and validate the information will reject a significant portion of the data.

This happens because the validation tools find logical errors in the extracted datasets, and the verification tools fail to match them against the validated sources. So, even if you review the datasets in person and find them appropriate, you will have to skip the validation step, which leads to more data errors.

- Inaccurate representation of business processes.

Poor ETL performance is a frequent cause of inaccurate data overviews. In the best-case scenario, the data extracted, transformed, and loaded into the data warehouse is incomplete, but it can still be used to understand how the business operated over the past period.

However, ETL may also run so slowly that processing and gathering all the relevant data takes longer than the available time window. This leaves managers unable to analyze reports on business processes and take reactive measures.

- Inability to make informed business decisions.

ETL provides managers with a consolidated view, helping them analyze the information gathered in the data warehouse. Some specialists use analytical and reporting tools for this purpose. Either way, initiatives and informed business decisions are grounded in the data previously processed by an ETL solution.

If an ETL process fails to perform as expected, managers receive inaccurate reports, for example, dashboards that are not logically correct. This can happen, for instance, because different types of data were mixed. As a result, the reports are of no use and offer no strategic insight.

8 Ways to Improve Your ETL Performance

Given how complex it is to set up ETL software properly, consider the following tips to improve your ETL performance.

Process Evenly Sized Files with COPY Command

One of the simplest and most effective ways to improve ETL performance is to give every slice an equal amount of work. In Amazon Redshift, each compute node is divided into slices with dedicated cores, and the COPY command loads input files in parallel across those slices. Splitting the input into evenly sized files distributes the processing capacity evenly and prevents overloaded slices from slowing down the process.

The exact number of slices depends on the node type. For example, a DS2.XLARGE compute node has two slices, while a DS2.8XLARGE has 16.
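As a rough illustration of the idea, the sketch below picks a file count that is a multiple of the total slice count and then splits a list of rows into chunks of nearly equal size. The function names and the 256 MiB target file size are assumptions made for this example, not values taken from any tool.

```python
def plan_file_count(total_bytes, slices_per_node, node_count,
                    target_file_bytes=256 * 1024 ** 2):
    """Pick a file count that is a multiple of the cluster's slice count.

    target_file_bytes is an assumed default for this sketch.
    """
    total_slices = slices_per_node * node_count
    # Ceiling division: files needed to keep each one near the target size.
    wanted = max(1, -(-total_bytes // target_file_bytes))
    # Round up to the next multiple of the slice count so no slice sits idle.
    return -(-wanted // total_slices) * total_slices


def split_evenly(rows, file_count):
    """Split rows into file_count chunks whose sizes differ by at most one."""
    base, extra = divmod(len(rows), file_count)
    chunks, start = [], 0
    for i in range(file_count):
        size = base + (1 if i < extra else 0)  # first `extra` chunks get one more row
        chunks.append(rows[start:start + size])
        start += size
    return chunks


# Two DS2.XLARGE nodes with two slices each give four slices in total.
files = plan_file_count(total_bytes=1024 ** 3, slices_per_node=2, node_count=2)
chunks = split_evenly(list(range(10)), files)
print(files, [len(chunk) for chunk in chunks])
```

Each chunk would then be written to its own file before the load, so that every slice receives roughly the same amount of data.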

Use Workload Management

Improving ETL performance also means defining multiple queues for different workloads. This keeps ETL runtimes from becoming inconsistent.

For this purpose, create a queue dedicated to the ETL process and configure it with five or fewer slots. This helps mitigate the excessive use of COMMIT. Then, claim the extra memory available in the queue by setting the “wlm_query_slot_count” parameter. Finally, create a separate queue for reporting queries, and do not forget to set up the dynamic memory parameters.
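A minimal sketch of what such a session could look like, assuming an Amazon Redshift cluster where the ETL queue is matched by a query group. The helper name, the query group name “etl”, and the example statement are hypothetical; the function only builds the SQL text and does not connect to a database.

```python
def etl_session_statements(etl_sql, slots, queue_slots=5, query_group="etl"):
    """Wrap one ETL statement so it temporarily claims `slots` queue slots.

    query_group="etl" is a hypothetical group name for the dedicated queue.
    """
    if not 1 <= slots <= queue_slots:
        raise ValueError("slots must be between 1 and the queue's slot count")
    return [
        f"SET query_group TO '{query_group}';",   # route the session to the ETL queue
        f"SET wlm_query_slot_count TO {slots};",  # claim the memory of several slots
        etl_sql.strip().rstrip(";") + ";",        # the memory-hungry ETL step
        "SET wlm_query_slot_count TO 1;",         # hand the extra slots back
        "RESET query_group;",
    ]


for statement in etl_session_statements("VACUUM sales", slots=3):
    print(statement)
```

Keeping the slot count within the queue size is the point of the check: a statement that asks for more slots than the queue has cannot be served by that queue.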

Organize Parallel Execution

If data loading is sluggish, parallel execution may solve the issue. Run ETL jobs in parallel, i.e., execute SQL statements with parallel processes. Parallel execution is available for conventional queries and DDL commands.

You may also use this feature for DML statements. In Oracle, for example, you enable it with the “ALTER SESSION ENABLE PARALLEL DML” command. You also need to align the degree of parallelism across all tables and adapt the database configuration.

Additionally, you should not leave it to the optimizer to decide whether to execute an operation in parallel mode. For this purpose, use the “PARALLEL” and “NO_PARALLEL” hints.
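The sketch below shows how these pieces might fit together for a single INSERT ... SELECT load, assuming Oracle-style hint syntax. The helper names and the table names are hypothetical, and the function only assembles the SQL text.

```python
def parallel_hint(alias, degree):
    """Return an Oracle-style hint that forces or forbids parallel execution."""
    if degree > 1:
        return f"/*+ PARALLEL({alias}, {degree}) */"
    return f"/*+ NO_PARALLEL({alias}) */"


def parallel_insert_script(target, source, degree):
    """Build the statements for one INSERT ... SELECT load."""
    statements = []
    if degree > 1:
        # Parallel DML has to be switched on explicitly for the session.
        statements.append("ALTER SESSION ENABLE PARALLEL DML;")
    statements.append(
        f"INSERT {parallel_hint('t', degree)} INTO {target} t "
        f"SELECT {parallel_hint('s', degree)} * FROM {source} s;"
    )
    # Commit before this session reads the target table again.
    statements.append("COMMIT;")
    return statements


for statement in parallel_insert_script("sales_fact", "sales_stage", degree=4):
    print(statement)
```

Using the same degree for the target and the source keeps the two sides of the statement in step, which is one simple way to keep the degree of parallelism consistent across tables.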

Reduce and Optimize Datasets at Early Steps

To improve ETL performance even further, reduce the number of rows the database has to join as early as possible. This is especially vital for statements with complex WHERE conditions, as they can make the optimizer read the largest table too early.

Join all the small tables first and use the “LEADING” hint to indicate the correct join order.
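As a simple illustration, the helper below orders table aliases from the smallest to the largest row count and wraps them in an Oracle-style LEADING hint. The function name, the aliases, and the row counts are made up for this example, and raw row counts are only a rough stand-in for the rows that survive filtering.

```python
def leading_hint(row_counts):
    """Order table aliases from smallest to largest and build a LEADING hint."""
    ordered = sorted(row_counts, key=row_counts.get)
    return "/*+ LEADING(" + " ".join(ordered) + ") */"


# Hypothetical aliases with estimated row counts.
hint = leading_hint({"sales": 1_000_000_000, "region": 50, "product": 2_000})
print(f"SELECT {hint} ... FROM region, product, sales WHERE ...")
```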

Get Rid of Unnecessary Indexes

A typical issue is that data warehouses are over-indexed, even though such databases rarely need indexes. Creating another index to fix a performance problem does not work for ETL jobs. On the contrary, it makes ETL performance even worse.

This is because additional indexes slow down INSERT, UPDATE, and MERGE operations. They can also push the optimizer toward a nested loops join, i.e., an algorithm that reads rows from the first table in a loop and looks up the matching rows in the second one, which you should also avoid. So, the best way to improve ETL performance here is to drop the unnecessary indexes.

Load Data in Bulk

The following tips will help you handle big data better. Since you can store and process petabyte-scale datasets, you need to ensure they are transferred efficiently.

For this purpose, use a manifest file to load datasets from multiple files in one operation. Also, use temporary staging tables created by the “create temporary table” command to hold the data before the transformation. Then use the “alter table append” command to move data from the staging table to the target one. This way, you load all the datasets at once.
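As an example of the manifest step, the sketch below builds a manifest in the JSON layout that Amazon Redshift’s COPY command reads, along with a COPY statement that points at it. The helper names, bucket, file names, table name, and IAM role are placeholders invented for this illustration; nothing is uploaded or executed.

```python
import json


def build_manifest(urls, mandatory=True):
    """Return manifest JSON listing every file that one COPY should load."""
    entries = [{"url": url, "mandatory": mandatory} for url in urls]
    return json.dumps({"entries": entries}, indent=2)


def copy_from_manifest(table, manifest_url, iam_role):
    """Build a COPY statement that loads all files named in the manifest."""
    return f"COPY {table} FROM '{manifest_url}' IAM_ROLE '{iam_role}' MANIFEST;"


# Placeholder locations, for illustration only.
files = [f"s3://example-bucket/sales/part-{i:03d}.gz" for i in range(4)]
print(build_manifest(files))
print(copy_from_manifest(
    "sales_stage",
    "s3://example-bucket/sales/load.manifest",
    "arn:aws:iam::123456789012:role/ExampleRedshiftRole",
))
```

Marking entries as mandatory makes the load fail when a listed file is missing, rather than silently loading a partial dataset.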

Use Diagnostic Queries Regularly

The core of ETL performance improvement is monitoring your BI tool’s health. Such an ETL strategy helps you identify issues before they have an adverse effect on your cluster. You may use SQL monitoring scripts and diagnostic queries for this purpose. Here are a few examples:

- commit_stats.sql. This script shows detailed commit queue statistics so you can see the largest queue length and queue time. If there is any abnormality, the INSERT/UPDATE/COPY/DELETE operations likely take more time than they should.

- table_info.sql. This script shows unsorted statistics along with storage and key information. The query is valuable for checking whether there are any delays in the transformation steps.

- v_get_schema_priv_by_user.sql. This script lists the schemas each user has access to. Use it if reporting users can see unnecessary intermediate tables in reports.

These are only a few of the diagnostic queries available for ETL performance testing. It is useful to keep a list of them and run each one regularly or as a reactive measure after detecting an issue.

Gather Statistics on Target Tables

Consider this the last step of an ETL job. If you work with a complex stage or intermediate table, missing statistics may lead to poor estimates. Without fresh statistics, several stage tables with outdated information may be joined together, producing severe inaccuracies and a flawed execution plan. So, improve ETL performance by gathering statistics on target tables at the end of every job.
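To make the idea concrete, here is a small runnable sketch that uses SQLite, via Python’s built-in sqlite3 module, as a stand-in for a warehouse. It loads a target table and then runs ANALYZE as the last step. The table, index, and function names are invented for this example, and other databases use their own statistics commands.

```python
import sqlite3


def load_and_gather_stats(rows):
    """Load rows into a target table, then refresh optimizer statistics."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE target (id INTEGER, region TEXT)")
    conn.execute("CREATE INDEX idx_target_region ON target (region)")
    conn.executemany("INSERT INTO target VALUES (?, ?)", rows)
    conn.commit()
    conn.execute("ANALYZE")  # last step of the job: gather statistics
    # SQLite stores the gathered statistics in the sqlite_stat1 table.
    stats = conn.execute("SELECT tbl, idx, stat FROM sqlite_stat1").fetchall()
    conn.close()
    return stats


print(load_and_gather_stats([(1, "eu"), (2, "eu"), (3, "us"), (4, "us")]))
```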

Fortunately, a full-featured ETL solution already has nearly all of the above built in, so the probability of performance issues is much lower.

How Can We Help?

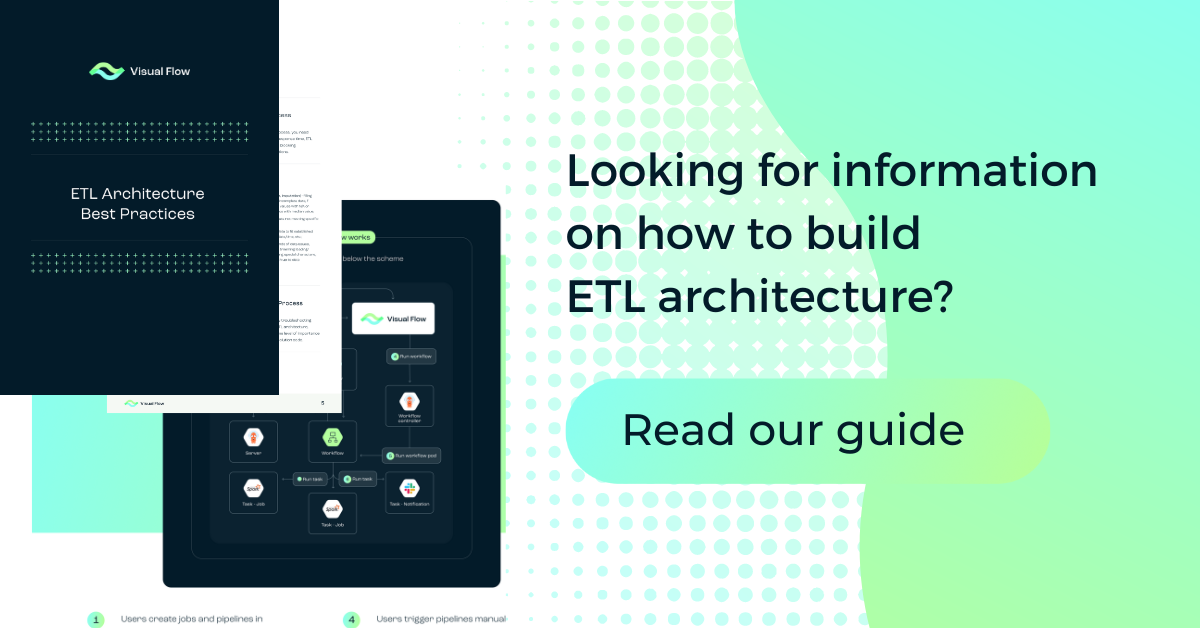

Visual Flow is developed by a subsidiary of IBA Group, offering proprietary software solutions for customers worldwide. Working with IBA Group specialists grants us expertise in multiple niches and essential experience with corporate technologies, BI solutions, and various data sources.

We have completed hundreds of projects for small to large-scale companies and decided to develop a unique ETL tool that will streamline your ETL pipeline performance.

IBA Group offers you Visual Flow, a cloud-native, open-source ETL tool that combines the best features of such well-known tools as Kubernetes, Spark, and Argo Workflows. Unlike competitors, our solution does not require the complex optimization described in the advice above. Using the parameter management system, you can set up a digital environment and make design adjustments in only 15 minutes.

As an additional benefit, you do not need to know any programming language to use Visual Flow fully. It also has the conventional ETL tools simplified and integrated for your convenience. Learn more about how Visual Flow can enhance data processing for your business.

Conclusion

ETL tools are of great importance for business operations. However, they frequently fail to perform as expected, which makes the data difficult to use.

There are ways to enhance ETL operation, but even careful application of all the ETL performance tuning tips does not always lead to optimal performance. What matters far more is using a proper ETL tool that assists you in many ways.

Consider using Visual Flow, a cloud-native ETL solution for your business developed by IBA Group’s subsidiary company. Visual Flow incorporates ETL best practices and combines the advantages of competitors. Contact us for more information about the product.

FAQ

The best way to take charge of your ETL pipeline is to employ a corresponding solution and adjust it considering our ETL performance tips.

Either your ETL tool is obsolete, or it has not been adjusted properly in line with ETL performance best practices.

It helps manage and analyze information generated in the business environment, which positively impacts administrative decision-making.
