If you use a data warehouse as part of your digital infrastructure, you will need ETL (Extract, Transform, Load) tools sooner or later. They automate extracting data from various sources, converting it into a single format, and loading it into the warehouse.
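Here is a minimal plain-Python sketch that makes the three stages concrete. It uses only the standard library and rests on two assumptions. The source is a small hypothetical CSV text. An in-memory SQLite database stands in for a real data warehouse. The tools reviewed below build structure around this same extract, transform, load pattern.

```python
import csv
import io
import sqlite3

# Hypothetical source data; a real pipeline would read a file, an API, or a database.
RAW_CSV = """order_id,amount,currency
1,10.50,usd
2,7.25,EUR
3,3.00,usd
"""


def extract(text):
    """Extract: read rows from a CSV source as dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows):
    """Transform: convert types and normalize currency codes to a single format."""
    return [
        (int(r["order_id"]), float(r["amount"]), r["currency"].upper())
        for r in rows
    ]


def load(records, conn):
    """Load: write the cleaned records into a warehouse table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, amount REAL, currency TEXT)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", records)
    conn.commit()


conn = sqlite3.connect(":memory:")  # stand-in for a data warehouse
load(transform(extract(RAW_CSV)), conn)
print(conn.execute(
    "SELECT currency, SUM(amount) FROM orders GROUP BY currency ORDER BY currency"
).fetchall())
# [('EUR', 7.25), ('USD', 13.5)]
```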

Despite the wide variety of ETL tools on the market, Python-based solutions hold a special place. They are easy to use, and they let you tailor the ETL pipeline to your company’s digital workflows.

Below, we have reviewed five of the best Python ETL frameworks that are definitely worth your attention.

What Is a Python ETL Framework?

A Python ETL framework is an environment for developing ETL software in Python. Such frameworks generally provide generic templates and modules that speed up and simplify pipeline creation. This is especially helpful when software engineers have to deal with large datasets.

Keep in mind that only a few “true” Python frameworks are designed for building ETL pipelines directly. They share the space with libraries, workflow management systems (WMSes), and other solutions with similar functionality. In some cases, a library is preferable to a framework, since it doesn’t bind specialists to a predefined pipeline structure.

Top 5 Python ETL Frameworks

And now it’s time to meet the five Python ETL frameworks that, in our opinion, will do the most to make life easier for you and your IT department.

Bonobo

If you don’t have years of Python programming experience and don’t want to learn a new API to create scalable ETL pipelines, this FIFO-based framework is probably the best choice for you.

In particular, Bonobo provides advanced tools for building data pipelines that can process multiple data sources at the same time. Its SQLAlchemy extension also lets you connect a pipeline directly to SQL databases. Besides SQL, Bonobo supports CSV, XML, JSON, XLS, and other formats.
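Bonobo builds a pipeline as a chain of plain Python callables, such as functions or generators. The sketch below imitates that FIFO-based model using only the standard library. Each node runs in its own thread, and rows reach the next node through a `queue.Queue`. This illustrates the idea and is not Bonobo’s API. In Bonobo itself, you would assemble such callables into a `bonobo.Graph` and execute it with `bonobo.run`. The node functions, `run_chain`, and the data are hypothetical.

```python
import queue
import threading

_DONE = object()  # sentinel that marks the end of the row stream


def extract():
    """Source node: yields rows one at a time (hypothetical data)."""
    yield from ["alice", "bob", "carol"]


def transform(name):
    """Transform node: receives one row and yields zero or more rows."""
    yield name.title()


def load(row):
    """Final node: a real pipeline would write to a file or database here."""
    yield f"loaded {row}"


def run_chain(source, *nodes):
    """Run source -> nodes[0] -> nodes[1] -> ... with a FIFO queue between stages.

    Each node runs in its own thread and handles rows as soon as they arrive.
    Rows produced by the last node are collected and returned.
    """
    if not nodes:
        raise ValueError("run_chain needs at least one node after the source")
    queues = [queue.Queue() for _ in nodes]
    results = []

    def feed():
        for row in source():
            queues[0].put(row)
        queues[0].put(_DONE)

    def work(i, node):
        out = queues[i + 1] if i + 1 < len(nodes) else None
        while (row := queues[i].get()) is not _DONE:
            for produced in node(row):
                if out is None:
                    results.append(produced)
                else:
                    out.put(produced)
        if out is not None:
            out.put(_DONE)  # tell the next stage that the stream has ended

    threads = [threading.Thread(target=feed)]
    threads += [threading.Thread(target=work, args=(i, n)) for i, n in enumerate(nodes)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


print(run_chain(extract, transform, load))
# ['loaded Alice', 'loaded Bob', 'loaded Carol']
```

Each stage is a single thread that reads from one FIFO queue, so rows leave the chain in the order they entered. Error handling is deliberately omitted to keep the sketch short.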

This framework is one of the easiest to use. It includes an ETL process graph visualizer that, together with the Graphviz library, makes process monitoring easier. A detailed guide also lets you start working with the framework in 10 to 20 minutes. As for debugging, you simply move or remove individual pipeline nodes through the GUI.

On the other hand, this simplicity makes Bonobo somewhat limited. As a rule, small independent teams use it to work with small datasets. In addition, Bonobo processes data row by row and cannot analyze an entire dataset at once, which rules it out for statistical analysis.

Pygrametl

Pygrametl is a Python framework that offers the most commonly used functionality for developing ETL processes. It has been regularly updated since 2009.

Pygrametl lets you build an entire ETL pipeline in Python and works with both CPython and Jython. This makes it a good choice if your project already uses Java code and/or JDBC drivers.

Note that this framework provides object-oriented abstractions for commonly used operations, such as interacting with different data sources, processing data in parallel, and creating snowflake schemas.
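One abstraction pygrametl is known for is the dimension table. Its `Dimension` class offers an `ensure` method that looks up a row and inserts it if it is missing, returning the surrogate key. The sketch below re-creates that lookup-or-insert idea for a tiny star-schema load using only `sqlite3`, so it runs without pygrametl. This hand-rolled class is not pygrametl’s API, and the table, column, and row values are hypothetical. A snowflake schema would chain such dimensions, with one dimension referencing another.

```python
import sqlite3


class Dimension:
    """Hand-rolled dimension table with lookup-or-insert (hypothetical helper)."""

    def __init__(self, conn, name, key, attributes):
        self.conn, self.name, self.key, self.attributes = conn, name, key, attributes
        cols = ", ".join(f"{a} TEXT" for a in attributes)
        # Identifiers come from trusted code; row values always use placeholders.
        conn.execute(f"CREATE TABLE {name} ({key} INTEGER PRIMARY KEY, {cols})")

    def ensure(self, row):
        """Return the surrogate key for row, inserting the member if it is new."""
        values = [row[a] for a in self.attributes]
        where = " AND ".join(f"{a} = ?" for a in self.attributes)
        found = self.conn.execute(
            f"SELECT {self.key} FROM {self.name} WHERE {where}", values
        ).fetchone()
        if found:
            return found[0]
        placeholders = ", ".join("?" for _ in self.attributes)
        cur = self.conn.execute(
            f"INSERT INTO {self.name} ({', '.join(self.attributes)}) "
            f"VALUES ({placeholders})",
            values,
        )
        return cur.lastrowid


conn = sqlite3.connect(":memory:")
book = Dimension(conn, "book", "bookid", ["title", "genre"])
conn.execute("CREATE TABLE sales (bookid INTEGER REFERENCES book(bookid), amount REAL)")

# Hypothetical source rows: the fact table stores only the dimension key.
for row in [
    {"title": "Dune", "genre": "SF", "amount": 12.0},
    {"title": "Emma", "genre": "Classic", "amount": 8.0},
    {"title": "Dune", "genre": "SF", "amount": 15.0},
]:
    conn.execute("INSERT INTO sales VALUES (?, ?)", (book.ensure(row), row["amount"]))

print(conn.execute(
    "SELECT bookid, SUM(amount) FROM sales GROUP BY bookid ORDER BY bookid"
).fetchall())
# [(1, 27.0), (2, 8.0)]
```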

A positive aspect of this framework is its excellent beginner’s manual, which helps even inexperienced Python developers get started. On the other hand, pygrametl’s community is fairly small. Developers who go beyond the approaches described in the manual may find it less intuitive and have fewer places to turn for help.

In general, this Python ETL framework is a good option for production-level data warehousing in large-scale companies.

Mara

If you don’t want to code all the ETL logic manually, Mara might be a good choice for you. It is a lightweight, self-contained Python framework for creating ETL pipelines, with many out-of-the-box features. It is often described as the middle ground between simple scripts and Apache Airflow, which is discussed below.

Mara has a well-designed web interface and CLI that can be embedded into any Flask application. Like the other solutions on our list, Mara lets engineers create pipelines for extracting and transferring data. It uses PostgreSQL as its data processing engine and relies on Python’s multiprocessing package for pipeline execution.
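Mara does its data processing inside PostgreSQL. As a result, transformations tend to be written as SQL and executed by the database rather than row by row in Python. The sketch below shows that pattern with Python’s built-in `sqlite3` module standing in for PostgreSQL. This substitution is an assumption made so the example runs anywhere. The table names and rows are hypothetical, and Mara’s own pipeline and task classes are not shown. See the mara-pipelines documentation for those.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for the PostgreSQL database Mara would use

# Extract/load step: land raw data as-is in a staging table (hypothetical rows).
conn.execute("CREATE TABLE raw_events (user_id INTEGER, event TEXT)")
conn.executemany(
    "INSERT INTO raw_events VALUES (?, ?)",
    [(1, "click"), (1, "purchase"), (2, "click"), (2, "click")],
)

# Transform step: expressed as SQL and executed inside the database,
# instead of looping over rows in Python.
TRANSFORM_SQL = """
CREATE TABLE user_stats AS
SELECT user_id,
       COUNT(*) AS events,
       SUM(CASE WHEN event = 'purchase' THEN 1 ELSE 0 END) AS purchases
FROM raw_events
GROUP BY user_id;
"""
conn.executescript(TRANSFORM_SQL)

print(conn.execute("SELECT * FROM user_stats ORDER BY user_id").fetchall())
# [(1, 2, 1), (2, 2, 0)]
```

Keeping the heavy lifting in the database is what lets this style of pipeline scale to large datasets. It is also why the approach is tied to the database engine it targets.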

As for benefits, Mara can handle large datasets, unlike many other Python ETL frameworks. On the other hand, if you do not plan to work with PostgreSQL, this product will be of no use to you. Also note that Mara currently supports neither Windows nor Docker.

Apache Airflow

We mentioned this solution above. Note that it is not a standard Python ETL framework but a workflow management system (WMS). It lets you plan, organize, and track any repetitive tasks, ETL processes in particular.

It is one of the most popular Python tools for orchestrating ETL pipelines. Although Airflow does not process data itself, you can use it to build workflows as directed acyclic graphs (DAGs). This approach gives it excellent scalability, which is a large part of why thousands of large companies around the world use Apache Airflow.
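The core idea of a DAG-based orchestrator can be shown without Airflow itself. Each task runs only after all of its upstream tasks have finished, and tasks with no dependency between them may run side by side. In Airflow you would declare tasks with operators and chain them with `>>`. The sketch below instead uses the standard library’s `graphlib` module (Python 3.9+), and the task names are hypothetical. It only records the execution order, where a real orchestrator would dispatch the work.

```python
from graphlib import TopologicalSorter

# Hypothetical ETL tasks; each task maps to the set of tasks it depends on.
dag = {
    "extract_orders": set(),
    "extract_customers": set(),
    "transform": {"extract_orders", "extract_customers"},
    "load": {"transform"},
}


def run(dag):
    """Return tasks in a valid execution order, batch by batch."""
    sorter = TopologicalSorter(dag)
    sorter.prepare()  # raises graphlib.CycleError if the graph is not acyclic
    order = []
    while sorter.is_active():
        # Every task in this batch has all of its upstream tasks done,
        # so an orchestrator could run the whole batch in parallel.
        ready = sorted(sorter.get_ready())
        for task in ready:
            order.append(task)  # placeholder for actually executing the task
            sorter.done(task)
    return order


print(run(dag))
# ['extract_customers', 'extract_orders', 'transform', 'load']
```

The "acyclic" part matters. If tasks depended on each other in a loop, no valid order would exist, and `prepare()` rejects such a graph.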

For managing and editing graphs, Airflow provides a convenient web interface. If you prefer working from the command line, its CLI offers a set of useful tools as well.

Why can’t Airflow be considered a universal solution? First, it is costly to implement. Second, it is difficult for beginners despite its very detailed and well-thought-out documentation, so it is aimed mainly at large companies. Finally, Airflow’s functionality may be excessive for some teams, which means they end up paying for features they don’t need at all.

Luigi

Luigi is another WMS for building long and complex ETL pipelines. Like Airflow, Luigi manages workflows by modeling them as DAGs.

This product is easier to use than Airflow, but it has fewer features and more limitations. Luigi has no built-in task scheduling mechanism, and it is harder to scale. It also cannot pre-start data pipelines and offers no task pre-validation. Still, both Luigi and Airflow embody the best DataOps practices. For end users, Luigi provides an intuitive web interface for visualizing tasks and handling dependencies.
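In Luigi, each task declares three things: its upstream dependencies (`requires`), the target it produces (`output`), and the work itself (`run`). A task whose output already exists counts as complete and is skipped. The sketch below re-creates that pattern with the standard library so it runs without Luigi installed. The `Task` base class and `build` function are simplified stand-ins, not Luigi’s classes. Real Luigi tasks subclass `luigi.Task`, return target objects such as `luigi.LocalTarget` from `output`, and are started with `luigi.build` or the `luigi` command. The task names and data are hypothetical.

```python
import os
import tempfile


class Task:
    """Simplified stand-in for Luigi's Task pattern (not Luigi's API)."""

    def requires(self):
        return []  # upstream tasks

    def output(self):
        raise NotImplementedError  # path of the file this task produces

    def run(self):
        raise NotImplementedError

    def complete(self):
        # As in Luigi, a task counts as done once its output exists.
        return os.path.exists(self.output())


def build(task, log):
    """Run unmet dependencies first, then the task itself, skipping finished work."""
    for dep in task.requires():
        build(dep, log)
    if not task.complete():
        task.run()
        log.append(type(task).__name__)


WORKDIR = tempfile.mkdtemp()


class Extract(Task):
    def output(self):
        return os.path.join(WORKDIR, "raw.txt")

    def run(self):
        with open(self.output(), "w") as f:
            f.write("3\n4\n5\n")  # hypothetical raw data


class Sum(Task):
    def requires(self):
        return [Extract()]

    def output(self):
        return os.path.join(WORKDIR, "total.txt")

    def run(self):
        with open(Extract().output()) as src:
            total = sum(int(line) for line in src)
        with open(self.output(), "w") as dst:
            dst.write(str(total))


log = []
build(Sum(), log)  # runs Extract, then Sum
build(Sum(), log)  # does nothing: both outputs already exist
print(log)
# ['Extract', 'Sum']
```

Completion is tied to output files, so rerunning the pipeline skips finished work. In this sketch, deleting a task’s output file forces it to run again.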

Luigi is developed more slowly than Airflow, and its documentation has some gaps. Still, its balance of cost, ease of use, and features makes Luigi a smart choice for those who are satisfied with basic pipeline-running capabilities.

How to Choose the Best Python Framework for ETL Pipeline

Each existing Python solution is tailored to specific goals. Therefore, when choosing a Python framework for building ETL pipelines, consider the following:

- What scale of network infrastructure is it designed for?

- What limitations does it have in integration with third-party software products?

- What types of data warehouses can it interact with?

- How easy is it to use?

How Could Visual Flow Help?

IBA Group is a leading software development company with 13 centers in the Czech Republic, Kazakhstan, Bulgaria, Poland, and the Slovak Republic, and 2,700+ multilingual employees with decades of expertise. Beyond long-established technologies, the company successfully applies current trends in its projects. These include machine learning and artificial intelligence, computer vision, data science, data engineering, the Internet of Things, robotic process automation, blockchain, digital twins, Industry 4.0, and many others.

Among the company’s most famous clients are IBM, Fujitsu, Lenovo, Panasonic, Coca-Cola, etc. As for the list of business partners, it includes leaders of the digital market like Microsoft, SAP, Red Hat, Salesforce, etc. The company’s portfolio currently includes 2,000+ projects for customers from 40+ countries, and these numbers are constantly growing.

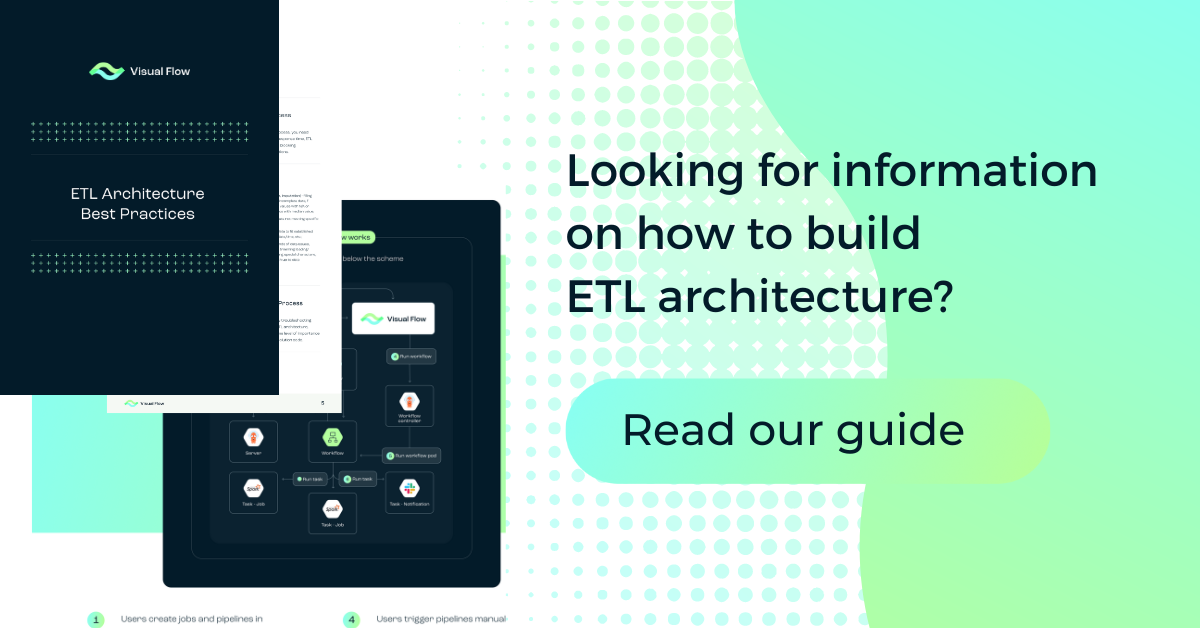

Aside from custom software development, deployment, and support services, the company also creates projects in collaboration with digital giants such as Amazon. In particular, IBA Group has launched Visual Flow, its own open-source product for implementing ETL pipelines, which is now available to the public.

IBA Group experts managed to create a solution that combines the best features of Kubernetes, Spark, and Argo. Specifically, cloud-based Visual Flow provides an intuitive GUI to build ETL processes, connect them to data processing pipelines, run and schedule them, as well as monitor their execution.

Learn more about the features of this cost-effective solution on the AWS website.

Conclusion

We hope we have helped you choose the most suitable Python ETL framework for your data warehouse. Contact us if you need professional help optimizing your digital infrastructure.

FAQ

Python is a dominant programming language in ETL solution development. There are currently hundreds of Python ETL tools, including frameworks, libraries, and more.

To decide which Python ETL framework will suit you best, you should consider your company’s size, the specifics of your data warehouses, and the limitations of that particular solution.

Among the dozens of advanced ETL Python frameworks, we would highlight the following five:

- Bonobo;

- Pygrametl;

- Mara;

- Apache Airflow;

- Luigi.
